Challenge

Enterprises in the financial sector face both cost pressure and clients who expect outstanding service. Almost all larger institutions have to decide whether to develop machine learning applications in-house or purchase them from third parties. Third-party applications often appear as black boxes, or at best as (dark) grey boxes, and it is extremely challenging to verify their performance, their security, or their behaviour in extreme scenarios.

Example

You decide to buy a third-party chatbot service for use in your customer relationship management software. The application lets you train a chatbot on your own data so that it better serves your needs. However, state-of-the-art natural language processing (NLP) models may leak private data, for example by reproducing fragments of their training data in their responses. Without you noticing, sensitive information about your customers may leak through the chatbot.

Approach

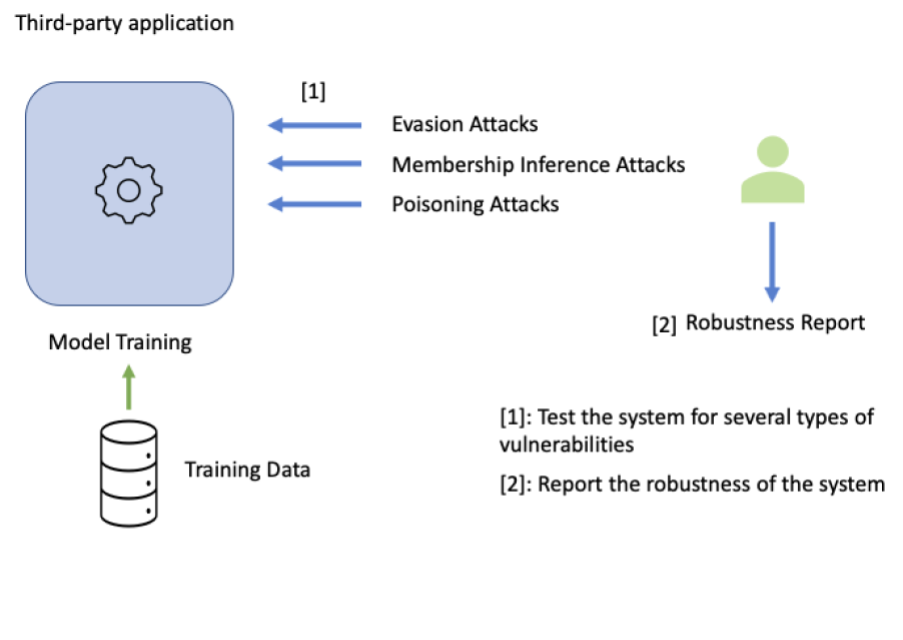

We apply state-of-the-art techniques to check black-box and third-party AI systems for vulnerabilities. A full check requires access to the system. Our approach consists of the following steps:

- We feed the application many different attack cases and collect the predictions it returns. Specifically, we check:

  - whether the application is vulnerable to membership inference attacks (leakage of the training data);

  - whether poisoning attacks are effective against the models the application produces;

  - how easy evasion attacks are to deploy against the application, and how severe the resulting risk is.

- We report the vulnerabilities of the application. Our client receives a detailed report and evaluation of the third-party application, which is essential for judging its security level and trustworthiness.

- Our client can then decide whether to continue with the application, request improvements from the provider, or stop using the application altogether.
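To make the membership inference check more concrete, here is a minimal sketch of one common black-box variant, a confidence-threshold attack. It is an illustration under stated assumptions, not a description of our actual tooling: `ToyBlackBox`, `membership_advantage` and `best_advantage` are hypothetical names, and the toy model simply stands in for a third-party service that returns a confidence score per query. The idea is that a model which is noticeably more confident on records it was trained on lets an attacker guess which records were in the training data.

```python
from typing import Callable, Hashable, Sequence


def membership_advantage(
    confidence: Callable[[Hashable], float],
    members: Sequence[Hashable],
    non_members: Sequence[Hashable],
    threshold: float,
) -> float:
    """True-positive rate minus false-positive rate of the rule
    'confidence >= threshold means the record was in the training set'."""
    tpr = sum(confidence(x) >= threshold for x in members) / len(members)
    fpr = sum(confidence(x) >= threshold for x in non_members) / len(non_members)
    return tpr - fpr


def best_advantage(
    confidence: Callable[[Hashable], float],
    members: Sequence[Hashable],
    non_members: Sequence[Hashable],
    steps: int = 100,
) -> float:
    """Sweep thresholds in [0, 1] and return the largest advantage found.
    0.0 means the attack does no better than guessing; 1.0 means members
    and non-members are perfectly separable."""
    thresholds = [i / steps for i in range(steps + 1)]
    return max(
        membership_advantage(confidence, members, non_members, t)
        for t in thresholds
    )


class ToyBlackBox:
    """Hypothetical stand-in for a third-party model (assumption: the real
    service exposes some confidence score per query). It is deliberately
    overconfident on records it has memorised."""

    def __init__(self, training_records: Sequence[Hashable]) -> None:
        self._seen = set(training_records)

    def confidence(self, record: Hashable) -> float:
        return 0.95 if record in self._seen else 0.60


# Records known to be in the training data vs. records known not to be.
members = list(range(0, 50))
non_members = list(range(50, 100))

leaky = ToyBlackBox(members)
leaky_advantage = best_advantage(leaky.confidence, members, non_members)

# A model that treats all records alike gives the attacker nothing.
flat_advantage = best_advantage(lambda record: 0.80, members, non_members)

print(f"leaky model advantage: {leaky_advantage:.2f}")
print(f"flat model advantage:  {flat_advantage:.2f}")
```

In a real assessment the `confidence` callable would wrap queries to the application under test, and the member and non-member sets would come from data the client knows was or was not used for training. An advantage well above zero would be one signal worth including in the vulnerability report.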